Why Enterprise AI Needs Real Business Context

There is a recurring frustration with modern enterprise AI deployments: the assistant sounds intelligent, yet remains functionally "hollow." When put to the test, these systems often fail to answer specific questions about the actual business because they lack access to operational reality. Having customer data, inventory levels, and live project statuses in silos means nothing if the AI is disconnected from those systems.

The Model Context Protocol (MCP) addresses this by creating a standardized gateway for AI assistants to retrieve information from enterprise systems on demand. Rather than simply "feeding" more data into a model, an MCP-compatible data layer enables AI to query the right sources at the right time, through governed channels that respect security policies and compliance requirements.

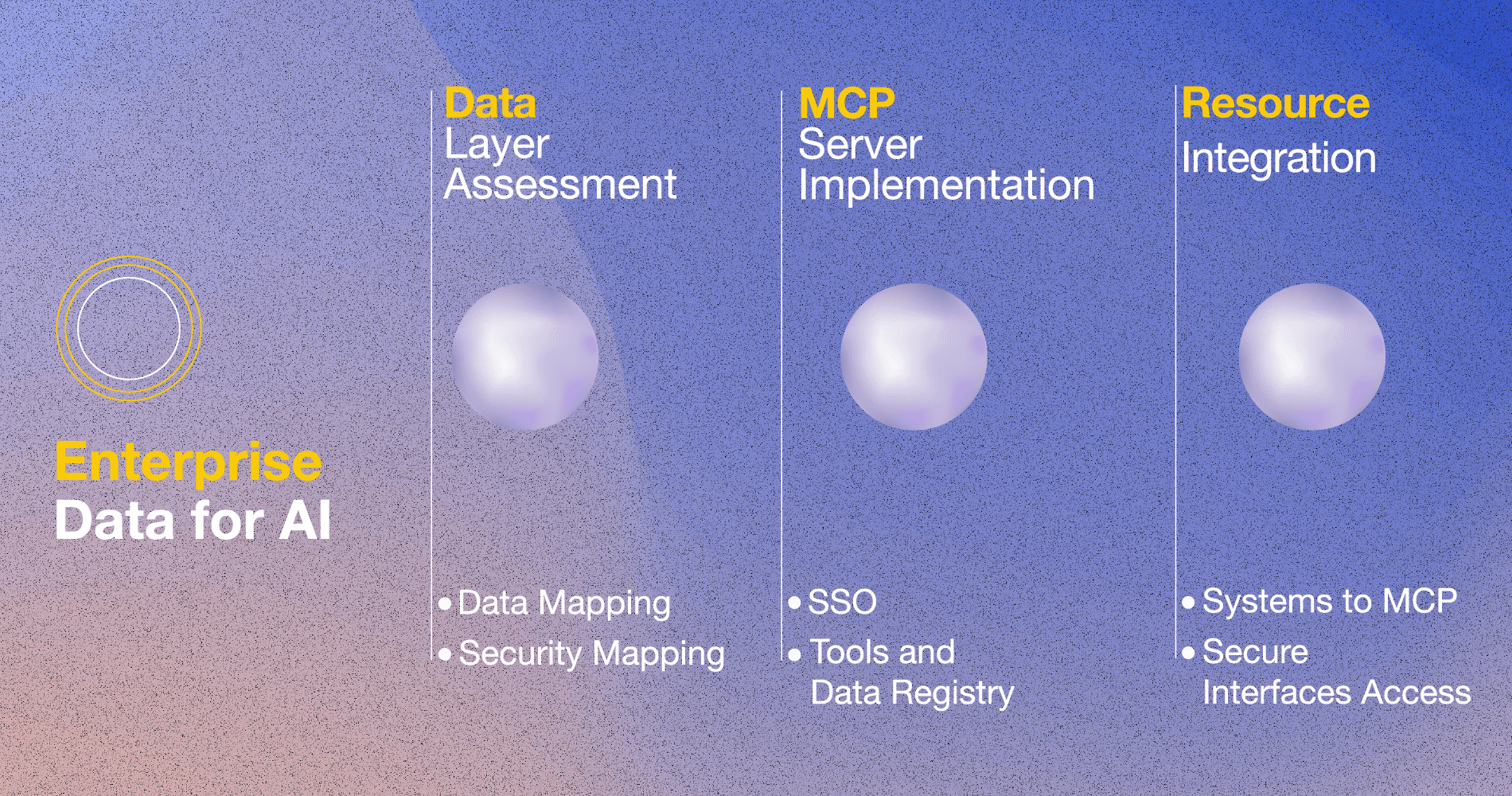

Three Phases

Businesses can approach this integration in three phases, depending on where they currently stand.

Data layer assessment and strategy begins with a map of the enterprise landscape. This map indicates which systems hold critical data, where friction points exist, and which security boundaries must be respected. It is a top-down overview that identifies the highest-impact opportunities.

For example, a financial services firm might have customer data scattered across a legacy CRM and several regional databases, each with its own document system. AI assistants can be useful here, but only once it is clear which data sources can be safely exposed and under what governance.
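
The output of such an assessment can be captured as a simple inventory. The following is a minimal sketch, not a prescribed format: the source names, the `Sensitivity` levels, and the exposure policy in `exposable` are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Sensitivity(Enum):
    # Hypothetical classification levels; a real firm would use its own scheme.
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class DataSource:
    name: str
    owner: str
    sensitivity: Sensitivity
    has_access_controls: bool


def exposable(
    sources: List[DataSource],
    max_sensitivity: Sensitivity = Sensitivity.INTERNAL,
) -> List[DataSource]:
    """Return the sources that pass the assumed policy: access controls
    are in place and sensitivity does not exceed the chosen ceiling."""
    return [
        s
        for s in sources
        if s.has_access_controls and s.sensitivity.value <= max_sensitivity.value
    ]


# Illustrative inventory for the financial services example.
INVENTORY = [
    DataSource("legacy_crm", "sales-ops", Sensitivity.INTERNAL, True),
    DataSource("regional_db_emea", "emea-it", Sensitivity.RESTRICTED, True),
    DataSource("document_store_apac", "apac-it", Sensitivity.INTERNAL, False),
]

if __name__ == "__main__":
    for source in exposable(INVENTORY):
        print(source.name)
```

Under this assumed policy only `legacy_crm` qualifies. The other two sources show up as friction points: one is too sensitive for a first rollout, and the other lacks access controls.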

MCP server implementation involves establishing the actual infrastructure. A secure MCP server comes with enterprise single sign-on and a registry of available tools and data sources. This access layer shows AI assistants what they're permitted to use and how to retrieve context.

Instead of building custom integrations for each assistant or region, a multinational manufacturer with a field technician system could deploy a single MCP server. That server would handle authentication and permission management and connect to ticketing and other systems. When a technician asks their AI assistant about a machine error code, the request flows through this layer and returns structured data that the assistant can work with.
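
The flow in this example can be sketched in plain Python. This is not the MCP SDK or the protocol's wire format. `AccessLayer`, the tool names, the roles, and the error code table are hypothetical, and the caller's role is assumed to have been established already by single sign-on.

```python
from typing import Any, Callable, Dict, Iterable, List, Set

Handler = Callable[[Dict[str, Any]], Dict[str, Any]]


class AccessLayer:
    """Hypothetical stand-in for the single governed layer: a registry of
    tools plus a role check applied to every call."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Handler] = {}
        self._roles: Dict[str, Set[str]] = {}

    def register(self, name: str, handler: Handler, allowed_roles: Iterable[str]) -> None:
        self._handlers[name] = handler
        self._roles[name] = set(allowed_roles)

    def list_tools(self, role: str) -> List[str]:
        # An assistant only sees the tools its user's role may use.
        return sorted(n for n, roles in self._roles.items() if role in roles)

    def call(self, role: str, name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
        if name not in self._handlers:
            return {"ok": False, "error": "unknown_tool"}
        if role not in self._roles[name]:
            return {"ok": False, "error": "forbidden"}
        return {"ok": True, "data": self._handlers[name](arguments)}


# Illustrative data; a real handler would query the maintenance system.
ERROR_CODES = {"E42": {"meaning": "coolant pressure low", "action": "inspect pump seal"}}


def lookup_error_code(arguments: Dict[str, Any]) -> Dict[str, Any]:
    code = arguments["code"]
    entry = ERROR_CODES.get(code, {})
    return {"code": code, "known": bool(entry), **entry}


layer = AccessLayer()
layer.register("lookup_error_code", lookup_error_code, allowed_roles=["technician"])
layer.register(
    "close_ticket",
    lambda args: {"closed": args["ticket_id"]},
    allowed_roles=["supervisor"],
)

if __name__ == "__main__":
    print(layer.list_tools("technician"))
    print(layer.call("technician", "lookup_error_code", {"code": "E42"}))
    print(layer.call("technician", "close_ticket", {"ticket_id": 7}))
```

Because every assistant and region goes through the same `call` path, a permission change is made once in the registry rather than in each integration.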

Resource integration connects individual systems to the MCP layer, making each accessible via secure, standardized interfaces. Integrations are tested to ensure they respond reliably to queries and respect access controls.
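
One way to approach that testing is a small probe run against each connector before it goes live. The sketch below assumes a hypothetical `InventoryConnector` with a `query(role, sku)` method. The roles and the expected reply shape are also illustrative.

```python
from typing import Dict, Iterable


class InventoryConnector:
    """Hypothetical connector wrapping one backend system."""

    def __init__(self, records: Dict[str, int], readers: Iterable[str]) -> None:
        self._records = dict(records)
        self._readers = set(readers)

    def query(self, role: str, sku: str) -> Dict[str, object]:
        if role not in self._readers:
            raise PermissionError(f"{role} may not read inventory")
        return {"sku": sku, "on_hand": self._records.get(sku, 0)}


def check_integration(connector, allowed_role: str, denied_role: str, probe_sku: str) -> Dict[str, bool]:
    """Run two probes: one that should succeed and one that should be refused."""
    results = {}

    # Reliability: an authorized query returns the expected structure.
    try:
        reply = connector.query(allowed_role, probe_sku)
        results["responds"] = set(reply) == {"sku", "on_hand"}
    except Exception:
        results["responds"] = False

    # Access control: an unauthorized role must be rejected, not served.
    try:
        connector.query(denied_role, probe_sku)
        results["denies_unauthorized"] = False
    except PermissionError:
        results["denies_unauthorized"] = True

    return results


if __name__ == "__main__":
    connector = InventoryConnector({"PUMP-7": 12}, readers=["planner"])
    print(check_integration(connector, "planner", "guest", "PUMP-7"))
```

Checking the refusal path explicitly matters because a connector that answers everyone would still pass a reliability-only test.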

For example, a healthcare organization might first enable an AI assistant to answer questions about lab results without improperly exposing protected health information. As confidence builds, it could add pharmacy inventory, billing records, and clinical guidelines. Every request would still flow through a single governed layer that is reusable across departments and future AI applications.
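
A field-level filter is one simple way to picture the lab results case. The sketch below is illustrative only: the field names in `IDENTIFYING_FIELDS` are assumptions, not a compliance definition of protected health information, and the permission flag is assumed to come from the governed layer.

```python
from typing import Any, Dict

# Assumed list of identifying fields; a real deployment would take this
# from its own privacy and compliance policy.
IDENTIFYING_FIELDS = frozenset({"patient_name", "date_of_birth", "mrn"})


def lab_result_view(record: Dict[str, Any], may_see_identifiers: bool) -> Dict[str, Any]:
    """Return a copy of the record, dropping identifying fields unless
    the caller is permitted to see them."""
    if may_see_identifiers:
        return dict(record)
    return {k: v for k, v in record.items() if k not in IDENTIFYING_FIELDS}


# Synthetic record for demonstration.
RECORD = {
    "patient_name": "Example Patient",
    "mrn": "000000",
    "date_of_birth": "1970-01-01",
    "test": "HbA1c",
    "value": 5.4,
    "unit": "%",
}

if __name__ == "__main__":
    print(lab_result_view(RECORD, may_see_identifiers=False))
```

Keeping the filter in the shared layer, rather than in each assistant, means later sources such as billing records can reuse the same rule.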

AI as an Operational Asset

This approach shifts enterprise AI from a novelty to an operational asset: assistants become context-aware without massive data migrations or model retraining. Most importantly, organizations gain a foundation that outlasts any single model or vendor, because the access layer and integrations remain valuable as AI technology evolves.

So it is probably time to move the conversation from "Can AI do this?" to "Which capabilities should AI access, and under what conditions?" That is a far more productive question for enterprises balancing risk and innovation.